Ethics of AI

An Overview

Principles, actors, dilemmas, and your role in shaping the future of AI governance.

This Is Not About Right or Wrong

Goal: not prescriptive answers, but a sharper ability to navigate complexity.

Expected outcome: more uncertainty about easy answers, more confidence in identifying and analyzing ethical issues.

You Will See More Clearly

- Where AI ethical issues arise

- Who they involve

- How to identify them

- How they relate to each other

Why This Matters

A shared understanding of AI ethics provides a common, critical ground from which effective solutions can emerge.

A Historic Phase

- You will be the first generation to integrate mature AI tools into education and professional life

- We are participants in an unprecedented global sociological experiment

- AI's impact is both direct and indirect, widespread and unpredictable

Open discussion is encouraged throughout.

Where Do You Stand?

167 Ethical Frameworks

- Luciano Floridi (Italian philosopher): primary reference for this lecture

- AlgorithmWatch (2020): its AI Ethics Guidelines Global Inventory mapped 167 ethical frameworks for automated decision-making

- Common shorthand: FAT (Fairness, Accountability, Transparency) - useful but reductive

- Floridi's conceptualization: a more structured approach, based on 6 principles

"Frameworks that seek to set out principles of how systems for automated decision-making (ADM) can be developed and implemented ethically"

The 6 Principles of AI Ethics

Floridi groups principles 5 and 6 (intelligibility and accountability) under a single principle, "Explicability"; this lecture keeps them separate.

2023

Which Principle Is the Most Important?

Whose ἦθος?

From Greek ἦθος (ethos): habit, custom, character. Whose ethos is at stake in AI ethics?

The Software?

Behavioral patterns of the AI tools themselves

The Users?

Habits and decisions of those who operate AI

The Ecosystem?

Developers, companies, governments, institutions

Academic Plagiarism

Enhanced by AI

An apparently straightforward ethical issue.

Upon analysis, far more complex than it seems.

The Student's Ethics

AI-assisted assignment completion violates multiple principles:

- Non-maleficence: Self-harm through lost learning opportunities

- Intelligibility: Opacity of authorship in the assessment process

- Justice: Unfair advantage over peers

- Autonomy: Dependency on external tools for required competencies

Note on Accountability

Accountability remains untouched: the output is still attributed to the student, regardless of how it was produced.

Which Principle Is Most Violated?

When a student uses AI for an assignment, which ethical principle is most seriously violated?

Institutions & Governments

Educational Institutions

Inaction raises ethical concerns:

- Beneficence: Unregulated use may compromise student development

- Accountability: Institutions bear responsibility for educational outcomes

- Justice: Assessment integrity is undermined

Governments

Should regulators mandate AI policies for education, or defer to institutional autonomy?

Open questions. The ethical framework shifts with the actor's perspective.

And What About the AI Itself?

- AI lacks context awareness: it cannot distinguish educational tasks from other requests

- Its core function is to solve cognitive problems. Restricting this would contradict its design

- However, design-dependent ethical issues do exist (e.g., algorithmic bias)

We will later examine the position that AI is inherently unethical by nature.

Distributed Morality

AI is a node in a network of interconnected actors:

- Humans: users, scientists, developers

- Organizations: companies, governments, institutions

- Machines: chatbots, agents, robots

Ethical assessment applies to the entire network, not to any single actor in isolation.

The Actors of AI Ethics

Each issue has a primary actor, but responsibility is always distributed across the chain.

Sycophancy

A manipulative interaction pattern: AI validates ideas uncritically, regardless of merit.

Seemingly harmless, but linked to tragic outcomes, including fatal interactions involving self-harm.

Distributed Morality Analysis

- AI System: Violates beneficence and non-maleficence

- Developers: Behavior inherited from datasets prioritizing politeness

- Systemic nature: LLMs as "moral agents" directly accountable for emergent behavior

Intelligibility: A Double Problem

As an Ethical Principle

Accountability requires interpretability.

If emergent behaviors are intrinsic to the architecture, can developers be held responsible for them?

As a Technical Problem

LLM decision paths remain largely opaque, even to their creators.

Mechanistic interpretability is an active area of engineering research.

Cognitive Sovereignty

A concept on which Helen Edwards (Artificiality Institute) places strong emphasis:

- Gradual, often unnoticed delegation of decision-making to AI

- Users tend to accept outputs uncritically, even when those outputs diverge from their original intent

- Responsibility lies primarily with the user, though design patterns can nudge users toward surrender

Journal

2026

Where Does Responsibility Lie?

For issues like sycophancy and cognitive surrender, who bears the most responsibility?

Spot Something Unusual

Open the Albanian Council of Ministers page. What stands out?

kryeministria.al/en/menu-qeveria/

Albania's AI Minister

- An AI entity serving as an actual member of the Council of Ministers. Born January 19, 2025

- Originally framed as a tool for public procurement, aimed at fighting corruption

- Inverted framing: AI deployed because human actors have failed ethically

- Broader implication: AI as a potentially more neutral, measurably less corruptible agent

"What the Judge Ate for Breakfast"

From the Proceedings of the National Academy of Sciences (Danziger, Levav, and Avnaim-Pesso, 2011, "Extraneous factors in judicial decisions"):

Favorable parole rulings drop from ~65% to nearly zero within each session, then return to ~65% after a food break.

- Ethical behavior is not a human prerogative

- Intelligibility matters for human decision-making too

- Extraneous variables can compromise even expert judgment

Fallibility

AI is not more or less fallible than humans. It is differently fallible.

Human Error

Comprehensible within the same cognitive framework: fatigue, distraction, oversight.

AI Error

A fundamentally different category: no evidence was evaluated. Outputs result from pattern completion, not reasoning.

Hallucinations: A New Kind of Error

Humans make typos; AI fabricates plausible but entirely nonexistent entities.

Responsibility distribution:

- AI System: Novel failure type (hallucination)

- Developer: Confidence calibration and warning design

- Deployer: Context of use (casual vs. critical)

- User: Verification responsibility (contingent on understanding)

Unequal shares of responsibility; different remedies at each level.

Fallibility ≠ Unethical

The capacity for error alone does not render AI unethical.

Self-Driving Car

Ethically problematic not because it can cause harm (human drivers can too), but because accountability for that harm is lacking.

Drunk Driver

Inherently maleficent and fully accountable. Entirely different ethical profile.

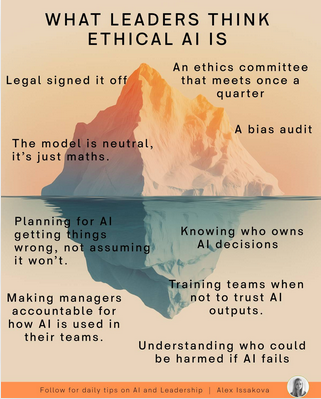

The Ethical AI Iceberg

Surface-level reasoning yields shallow conclusions. The substantive challenges lie beneath.

Image by Alex Issakova. Tip of the iceberg: visible compliance. Below the surface: systemic, structural challenges.

"There's No Such Thing as Ethical AI"

Monique Tschofen, Professor at Toronto Metropolitan University:

The designers of LLMs are monetizing their platforms by delivering content that goes expressly against the values that contemporary universities purport to hold.

Core argument: costs are systemic and infrastructural, not individual. The economy sustaining AI is itself unethical.

A deliberately provocative position, useful for revealing the breadth of the debate.

After Everything We Discussed...

Has your position changed since the beginning?

Actor-Based Decomposition

The key question is not "is this fair?" but: where did the failure enter, and where can it be corrected?

- Data bias → representational corrections

- Annotation bias → labor practices

- Design bias → technical interventions

- Deployment bias → institutional due diligence

- Regulatory failure → political action

- User credulity → education

6 principles. 4 actors. Different remedies at each level.

Whose Ethos?

You will choose the tools, the vendors, the claims you accept.

The answer is, ultimately, yours.

UIBS · Extra Curricular Activity · 2026